In your Python terminal, try evaluating the expression 0.1 + 0.2 == 0.3.

Weird, is it not? Python answers False, although the answer should have been True. Almost every programmer runs into this at some point, and those who have not are bound to sooner or later. To answer the question ‘Why is this happening?’, we must first understand how floating-point numbers are represented inside a computer.

This is not a bug in Python, or in any other language for that matter. It comes from a mismatch between the decimal number system we write numbers in and the binary number system the computer stores them in. Read on for a further explanation.

First: What are floating-point numbers?

Floating-point numbers represent fractional numbers (fractions, in short) and are used in most engineering and technical calculations. For example, 3.256, 2.1, and 0.0036 are floating-point numbers. Written as fractions, 0.1 is 1/10 and 0.2 is 2/10. When we take the sum of these two fractions we get 3/10, which is 0.3.
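As a small illustration of that fraction arithmetic, here is a sketch using Python's standard `fractions` module, which stores numerators and denominators exactly. The variable names are only for this example.

```python
from fractions import Fraction

# Exact rational arithmetic: 1/10 + 2/10 is exactly 3/10.
tenth = Fraction(1, 10)
two_tenths = Fraction(2, 10)
exact_sum = tenth + two_tenths

print(exact_sum)                     # 3/10
print(exact_sum == Fraction(3, 10))  # True
```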

Fairly straightforward, is it not? That is because we use base-10, the decimal number system, for everyday calculations. The decimal system uses the digits 0 to 9, and in it 0.1 + 0.2 = 0.3 exactly. Computers are different: whatever language a program is written in, be it Python, Java, or C, the numbers it works with are ultimately stored in base-2, the binary number system, which has only the digits 0 and 1.

Base-10 vs Base-2: The showdown!

Consider 1/3 in decimal. It is a recurring fraction that goes 0.3333333... and never ends. Similarly, 2/3 is 0.66666666.... If we cut both off after eight digits and add them, we get 0.99999999, not 1. Since the base of the decimal system is 10, a fraction (in lowest terms) can be written out exactly only if its denominator has no prime factors other than 2 and 5, the prime factors of 10. Similarly, in the binary system a fraction can be represented exactly only if its denominator is a power of 2, because 2 is the only prime factor of the base. Unfortunately, this means that most decimal fractions, including 0.1 and 0.2, have no exact representation in the binary number system.
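The prime-factor rule can be checked mechanically. The following is a minimal sketch, not a library function: `terminates` is a hypothetical helper that strips from the denominator every factor it shares with the base and reports whether anything is left over.

```python
from fractions import Fraction
from math import gcd

def terminates(fraction: Fraction, base: int) -> bool:
    """Return True if `fraction` has a finite expansion in `base`."""
    denominator = fraction.denominator  # Fraction keeps itself in lowest terms
    # Repeatedly divide out every factor the denominator shares with the base.
    common = gcd(denominator, base)
    while common > 1:
        denominator //= common
        common = gcd(denominator, base)
    # Nothing left means every prime factor of the denominator divides the base.
    return denominator == 1

print(terminates(Fraction(1, 10), 10))  # True:  0.1 is exact in decimal
print(terminates(Fraction(1, 3), 10))   # False: 0.333... recurs in decimal
print(terminates(Fraction(1, 10), 2))   # False: 1/10 recurs in binary
print(terminates(Fraction(1, 8), 2))    # True:  1/8 is 0.001 in binary
```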

Back to 0.1 + 0.2

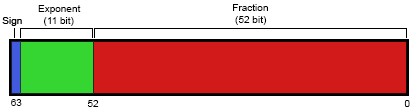

In base-2, 1/10 is an infinitely repeating fraction 0.0001100110011001100110011001100110011001100110011.... The same is the case for 2/10, which is 0.001100110011001100110011001100110011.... Stopping at any finite number of bits results in an approximation. On most machines today, floats are stored in the IEEE 754 double-precision floating-point format, which uses 64 bits per number.
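To see where those repeating bits come from, here is a rough sketch that generates binary digits by repeated doubling, the base-2 version of long division. `binary_digits` is a made-up helper for this article, and it uses exact `Fraction` arithmetic so that the digits themselves are not affected by rounding.

```python
from fractions import Fraction

def binary_digits(value: Fraction, count: int) -> str:
    """Return the first `count` binary digits after the point, for 0 <= value < 1."""
    digits = []
    for _ in range(count):
        value *= 2                # shift one binary place to the left
        bit = int(value >= 1)     # the integer part is the next digit
        digits.append(str(bit))
        value -= bit              # keep only the fractional part
    return "0." + "".join(digits)

print(binary_digits(Fraction(1, 10), 20))  # 0.00011001100110011001
print(binary_digits(Fraction(2, 10), 20))  # 0.00110011001100110011
```

The remainder never reaches zero, so the pattern 0011 keeps repeating no matter how many digits are requested.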

The first bit is the sign bit, S, the next 11 bits are the exponent bits, E, and the final 52 bits are the fraction, F. The double closest to 0.1 therefore has the exact decimal value 0.1000000000000000055511151231257827021181583404541015625, which is close to 0.1 but not exactly equal. Similarly, for 0.2 it is 0.200000000000000011102230246251565404236316680908203125.
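You can inspect both the bit fields and the exact stored values from Python. This is a sketch that assumes your platform uses IEEE 754 doubles for `float` (true of practically every machine today). `double_fields` is a hypothetical helper name, while `struct` and `decimal` are standard-library modules.

```python
import struct
from decimal import Decimal

def double_fields(x: float) -> tuple[int, int, int]:
    """Split a float into its sign, exponent, and fraction bit fields."""
    # Reinterpret the 8 bytes of the double as one 64-bit unsigned integer.
    (bits,) = struct.unpack(">Q", struct.pack(">d", x))
    sign = bits >> 63                  # 1 bit
    exponent = (bits >> 52) & 0x7FF    # 11 bits, stored with a bias of 1023
    fraction = bits & ((1 << 52) - 1)  # 52 bits
    return sign, exponent, fraction

sign, exponent, fraction = double_fields(0.1)
print(sign, exponent, hex(fraction))  # 0 1019 0x999999999999a

# Decimal(float) converts the stored binary value exactly, with no rounding.
print(Decimal(0.1))  # 0.1000000000000000055511151231257827021181583404541015625
print(Decimal(0.2))  # 0.200000000000000011102230246251565404236316680908203125
```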

Most programming languages return 0.30000000000000004 as the sum of 0.1 and 0.2. Interestingly enough, 0.1000000000000000055511151231257827021181583404541015625 + 0.200000000000000011102230246251565404236316680908203125 is not exactly equal to 0.30000000000000004 either. This is because the machine never sees 0.1 and 0.2 themselves, only their binary approximations, and the exact sum of those two approximations does not fit in a double, so the result has to be rounded once more. The double chosen for the result lies just above 0.3 and is displayed as 0.30000000000000004.
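The extra rounding step can be made visible by comparing the float result with an exact sum of the two stored values. This is a sketch, again assuming IEEE 754 doubles. `Fraction(float)` converts a float to the exact rational number it stores, and `float.hex()` shows its binary digits.

```python
from fractions import Fraction

# Exact sum of the two stored approximations, with no rounding at all.
exact_sum = Fraction(0.1) + Fraction(0.2)

# What the hardware returns: the exact sum rounded to a double.
float_sum = 0.1 + 0.2

print(float_sum)                         # 0.30000000000000004
print(Fraction(float_sum) == exact_sum)  # False: the sum was rounded
print(float_sum == 0.3)                  # False

# 0.3 and 0.1 + 0.2 are neighbouring doubles that differ in the last bit.
print((0.3).hex())      # 0x1.3333333333333p-2
print(float_sum.hex())  # 0x1.3333333333334p-2
```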

But wait, there’s more!

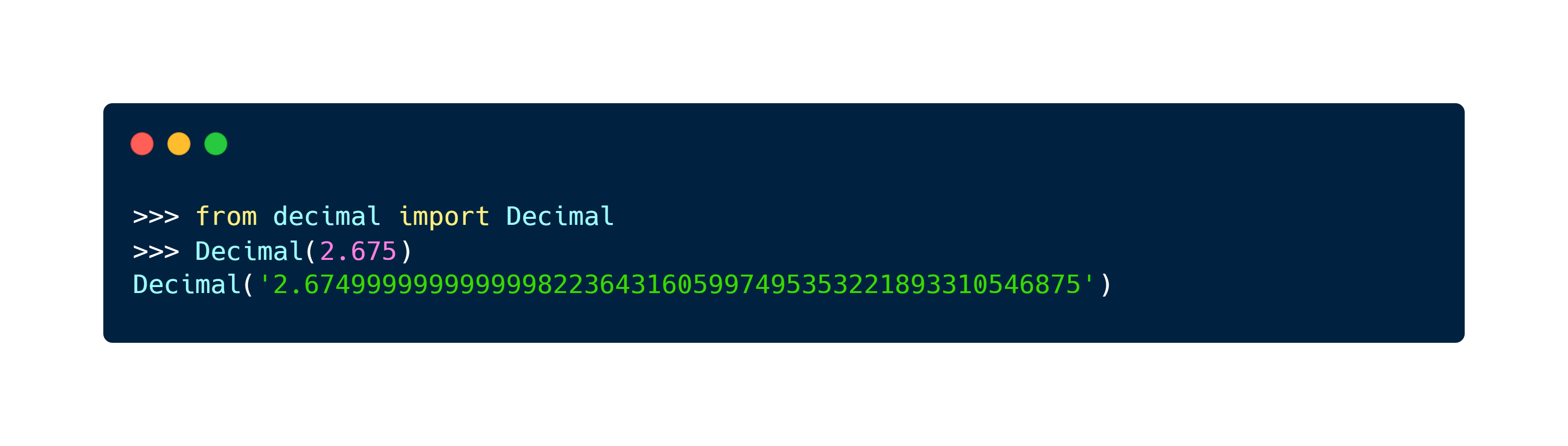

These approximation errors creep in when you try to round numbers too! You would expect rounding 2.675 to two places after the decimal point to return 2.68. But no! It returns 2.67. When 2.675 is converted to a binary floating-point number, it is again replaced with a binary approximation, whose exact value is 2.67499999999999982236431605997495353221893310546875. That is closer to 2.67 than to 2.68.
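In case the screenshot above does not load, the rounding surprise can be reproduced with a couple of lines. The `Decimal` call is there only to reveal the value that is actually stored.

```python
from decimal import Decimal

rounded = round(2.675, 2)
print(rounded)         # 2.67, not 2.68

# The stored value sits slightly below 2.675, so rounding down is correct.
print(Decimal(2.675))  # 2.67499999999999982236431605997495353221893310546875
```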

A solution in Python

When working with decimal calculations, a good practice is to round results to the number of decimal places your program actually requires before comparing or displaying them. For scenarios where the direction of rounding or the exactness of decimal arithmetic really matters, Python has a module named decimal.
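Here is a minimal sketch of both approaches. The two-decimal-place precision is an assumption made for the example. Note that `Decimal` values are built from strings, because building them from floats would carry the binary approximation along.

```python
from decimal import Decimal, ROUND_HALF_UP

# Approach 1: round to the precision you need before comparing.
print(round(0.1 + 0.2, 2) == 0.3)  # True

# Approach 2: do the arithmetic in base-10 with the decimal module.
total = Decimal("0.1") + Decimal("0.2")
print(total)                    # 0.3
print(total == Decimal("0.3"))  # True

# decimal also lets you choose the rounding rule explicitly.
rounded = Decimal("2.675").quantize(Decimal("0.01"), rounding=ROUND_HALF_UP)
print(rounded)                  # 2.68
```

The trade-off is speed: decimal arithmetic is done in software and is slower than hardware floats, so it is usually reserved for cases where exact decimal results are required.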

Unless you are working on projects that determine flight paths, safeguard the integrity of a nuclear power plant, or process the financial information of companies, floating-point approximations are not that big of an issue. Still, whenever you do floating-point calculations in your language of choice, it is wiser to be mindful of these approximations than oblivious to them.

Happy coding!